VR Gesture Interface based on Human-animal Interaction

By Ziyin Zhang, M.S. Digital Media at Georgia Tech

Master's Project Committee:

Michael Nitsche (Chair),

Janet Murray, Jay Bolter

Problem

There is currently no standard, unified interface in Virtual Reality (VR), and even though interfaces are starting to allow gesture-based interaction, many user interfaces are borrowed directly from 2D surface interactions. Too few experiences fully leverage the embodiment in VR. To address these issues and to explore a new design space, this project proposes an alternative gesture control scheme based on human-animal interaction. An interactive prototype is presented in which the player uses gestures to command an animal companion to finish tasks.

The song selection menu in Beat Saber, one of the most popular VR games, is based on user interface design for 2D surface interactions

VR provides the sense of embodiment by mapping the player’s movement to the avatar in virtual space. However, most experiences do not utilize this feature, and the player’s embodied virtual body is not being used. Furthermore, the player manipulates the VR environment by pressing buttons on the controllers, which could potentially break immersion.

Most VR controllers’ button mapping is similar to that of traditional game controllers, with the HTC Vive controllers being the exception. However, they still require users to learn the mapping.

Since VR interaction is particularly powerful when it uses embodiment, holding on to traditional game controllers could potentially break the immersion.

Background

Interaction Design Framework

Shneiderman defined three principles of direct manipulation for designing a controllable user interface [shneiderman1997direct]. His notion of direct manipulation was meant to contrast with the traditional command-line interfaces of the time. It still provides valuable insights into how to design a predictable and controllable user interface:

- Continuous representation of the objects and actions of interest.

- Physical actions or presses of labeled buttons instead of complex syntax.

- Rapid incremental reversible operations whose effect on the object of interest is immediately visible.

Gesture Interface

Most related work in gesture-based interaction design has been conducted on 2D surfaces such as computers and tablets. Devices used to track gestures range from touchscreens to depth sensors and cameras with computer vision. However, a few works attempt to apply gesture controls to VR.

Lee et al. provided a set of hand gestures to control avatars in their Virtual Office Environment System (VOES). Most of the gestures match established mental models; for example, the “Wave Hand” gesture is a waving hand and the “Stop” gesture is a fist. They provided a good pipeline for processing gesture data, breaking it down into different kinds of data, and applying different classifiers to each [lee1998control].

Related Works in VR Games

The Unspoken is a VR action game in which players duel with other magicians by casting spells using gestures. The gesture interactions take advantage of embodiment in VR, which makes the game much more compelling.

Falcon Age provides great examples of communicating and interacting with an animal companion, in this case a falcon. As you play and fight enemies with your virtual falcon, the interactions involve various gesture commands, such as whistling to call it over and pointing to direct its actions. Although the game presents multiple ways to interact with the falcon, only a few are incorporated into the actual gameplay.

Approach

To create an effective VR interface with embodied gesture-based interactions, I decided to design a gesture interface based on human-animal interactions. Human-animal relationships are widespread and, as we do not share a single language, they often include gestures as part of the communication. Because these gesture references are already in place, it is more natural for players to perform these gestures and easier to memorize them. In this sense, the gestures are more effective and ready to be adapted to VR.

Human-animal Interaction

Humans have always interacted with animals, from animal husbandry to keeping them as companions.

An analysis of dog training procedures and video documentaries on eagle hunters, such as The Eagle Huntress and Mongolia: The Last Eagle Hunters, identified many overlaps in tool usage and training. Both dog training and eagle hunting use a combination of voice and body gestures. The process of raising and bonding with the animal is essential to effective communication with it. The design phase explores the development of basic interactions based on human-animal gestures.

Design

The design of the gesture interface went through several iterations before settling on human-animal interactions for the final prototype version.

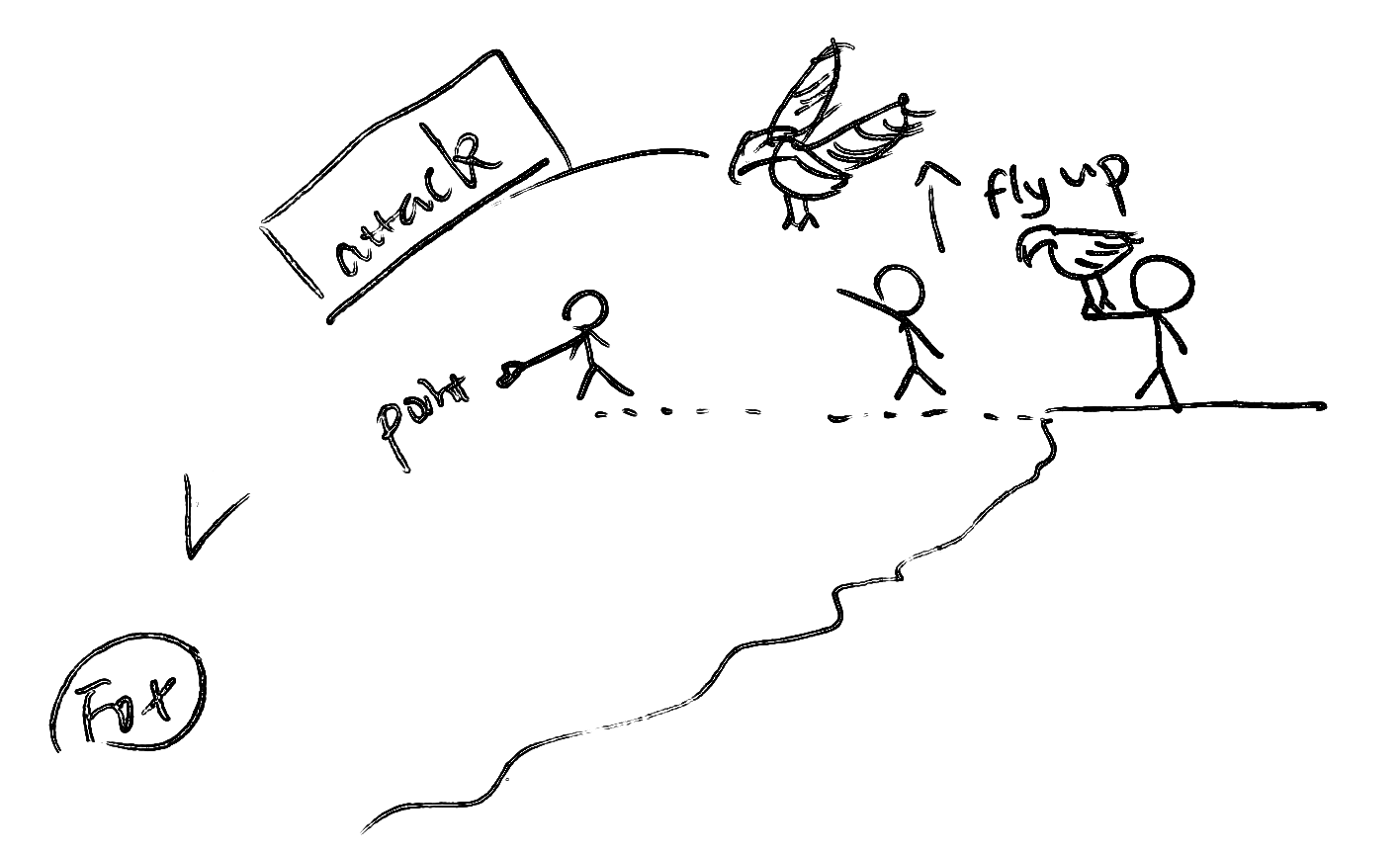

Gestures inspired by Eagle Hunters

The first design of the gesture interface was inspired by eagle hunters. By analyzing documentaries on Mongolian eagle hunters, I identified the body language that the hunters use to communicate with their eagles. As shown in the sketches below, the main gestures to interact with the bird companion are:

- Direct: direct the bird companion to interact with a target. Similar to the way eagle hunters kept their eagles on their arms, the bird companion starts off on the player’s arm. Once the player raises an arm, the bird will fly upwards, awaiting further directions. The player then uses the point gesture to direct the bird to fly over and interact, or in this case, attack the target.

- Return: instruct the bird companion to return after (or during) the interaction. Once the bird has finished interacting with the target, the player can instruct it to return by swinging the arm in a backwards motion. Once the bird starts to fly back, the player can raise the arm for the bird to stand on.

Sign Language-inspired Gesture Design

While learning basic American Sign Language, I was especially drawn to the concept of spatial grammar. As sign languages are not used in a linear sense, the subject and object of a verb cannot be derived from word order, hence the use of spatial grammar. Usually, before signers describe an event, they establish the spatial relationship of the entities by “putting” each entity on an imaginary plane in front of them.

Inspired by this concept, I designed two interaction schemes that make use of spatial grammar to define entities. Once the entities are defined, the player can perform gestures that describe the interaction between the entities and, if necessary, indicate the direction of the action.

User-defined Gestures with Reward-based Behavior Modification

The idea is to incorporate the process of defining gestures into the gameplay. This is inspired by reward-based behavior modification, which is used by both dog trainers and eagle hunters.

During the process of raising and bonding with the animal companion, the player uses food as rewards to teach the companion gestures for the following commands:

- Focus: get the attention of the companion.

- Direction: instruct the companion to face the target.

- Action: instruct the companion to go to the target and interact with the target.

However, since implementing user-defined gesture recognition was not feasible within the time constraints, this design was eventually changed to pre-designed gestures based on human-animal interaction.

Final Design: Gestures based on Human-animal Interaction

The final design was inspired by how I interact with my dog at home. Here, the user-defined gestures emerge in a kind of shared condition between the dog and the owner. In my case, rotating my hand leads the dog to roll over on the floor.

The design targets for the gesture interface are defined in the table below:

| Interaction | Pet Gesture | Hand Gesture |
|---|---|---|
| SELECT | Call name to get its attention | Point |
| ACTIVATE | Draw circles to make it roll over | Circle |
| ACTION | Throw sticks for it to fetch | Throw |
| CANCEL | Call name while clapping hands | Stop |

Implementation

The gesture recognition system is implemented using an HTC Vive headset, two Valve Index controllers, SteamVR Unity Plugin v2.5, and the Unity Engine. Unity made the VR visualization itself relatively simple to implement, but the project had to solve a number of challenges, particularly in implementing the gesture recognition.

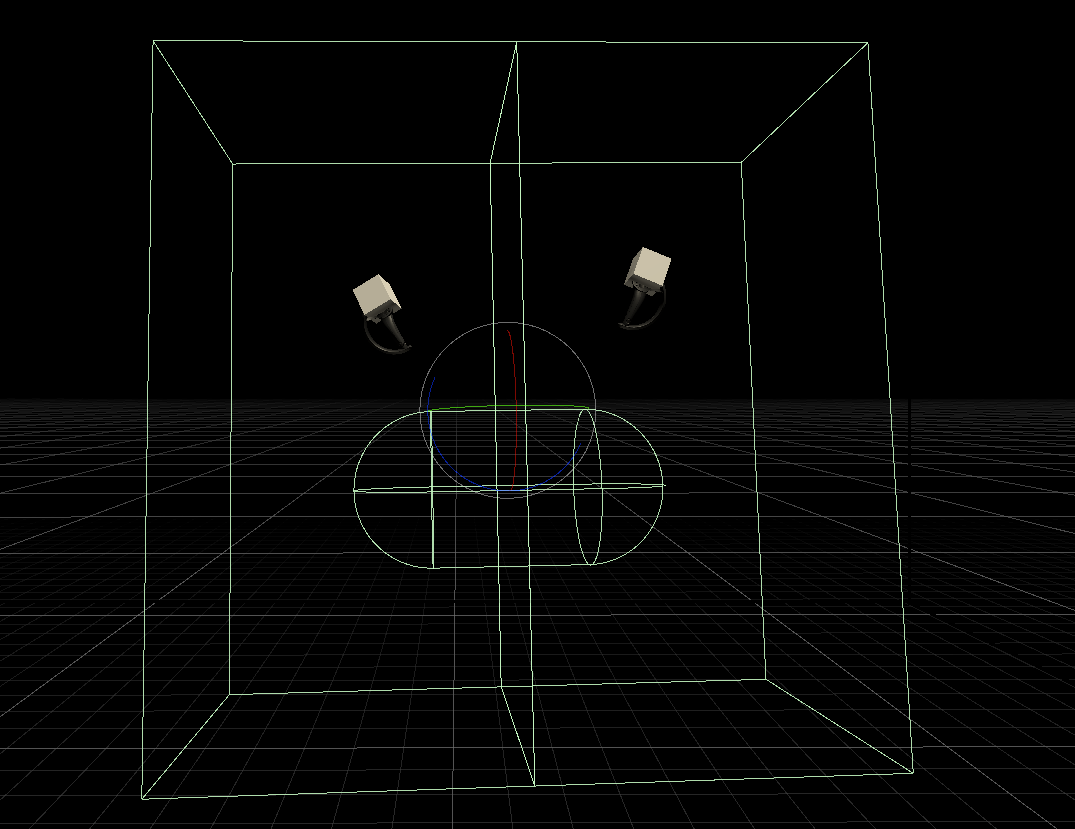

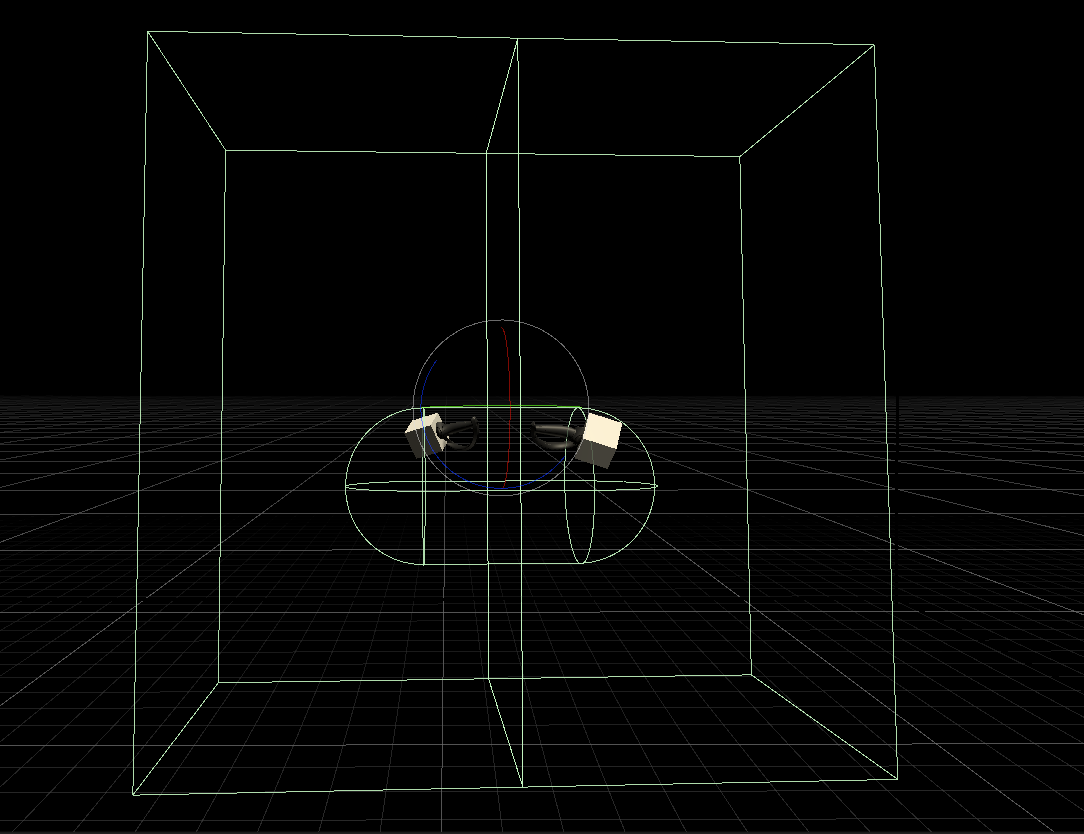

First Stage: Arm Gesture Detection

The first prototype is a simple scene where the relative position of hands with other parts of the body is used to detect arm gestures. This stage served as an exploration of the technical design space.

The position of the hands (represented by the two small cubes in the middle) relative to the position of the head (represented by the sphere) and the chest area (represented by the capsule) is used to detect simple gestures. The two outer boxes mark the areas for the left and right arm span and are used to detect which side of the body a hand is on.
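The relative-position check described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the project's Unity implementation: the `Vec3` type, the zone names, the `side_margin` threshold, and the convention that the player faces +z (so +x is to the right) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Vec3:
    x: float  # assumed: positive x is the player's right
    y: float  # assumed: y is the up axis, as in Unity
    z: float


def classify_hand(hand: Vec3, head: Vec3, chest: Vec3, side_margin: float = 0.2):
    """Return (height_zone, side) for one hand relative to head and chest.

    side_margin is a hypothetical dead zone (in metres) around the body's
    centre line; inside it the hand counts as "center".
    """
    # Height zone: compare the hand's height with the head and chest heights.
    if hand.y > head.y:
        height = "above_head"
    elif hand.y >= chest.y:
        height = "chest_level"
    else:
        height = "below_chest"

    # Side: compare the hand's horizontal offset from the chest.
    dx = hand.x - chest.x
    if dx > side_margin:
        side = "right"
    elif dx < -side_margin:
        side = "left"
    else:
        side = "center"
    return height, side
```

For instance, a hand held above the head and half a metre to the right of the chest would be classified as `("above_head", "right")`. A real implementation would also need to account for the player's facing direction rather than assuming a fixed forward axis.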

Second Stage: Gesture Recognition based on TraceMatch

Basic gestures like SELECT and ACTIVATE were implemented in this stage. The detection of hand gestures involved using the “finger curl” data from the Valve Index Controller, which can be retrieved via SteamVR Unity Plugin.

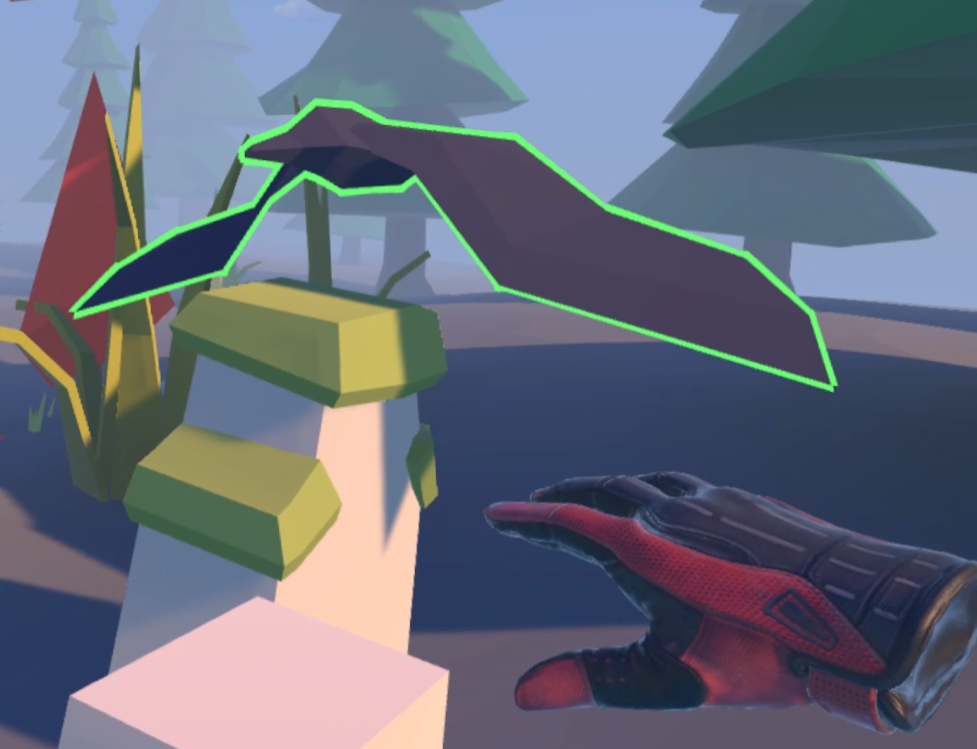

SELECT is detected when the player’s hand makes a pointing gesture. While the user holds the SELECT gesture, the system uses a Raycast from the Unity physics engine to detect where the user is pointing. If the Raycast hits an object that the user can interact with, the object starts glowing blue to indicate that it is interactable. This follows Shneiderman’s principle that the effect of an operation on the object of interest should be immediately visible.
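As a rough illustration of how a pointing pose might be derived from finger curl data, the sketch below assumes that each finger's curl is reported as a value from 0 (straight) to 1 (fully curled), ordered thumb to pinky. The function name and both thresholds are hypothetical and would need tuning against real controller data; the Raycast step is not covered here.

```python
def is_pointing(curls, straight_max=0.25, curled_min=0.6):
    """Return True if the curl values look like a pointing hand.

    curls: five values (thumb, index, middle, ring, pinky), each assumed to
    be in [0, 1] where 0 is straight and 1 is fully curled.
    straight_max / curled_min: hypothetical thresholds, not measured values.
    """
    _thumb, index, middle, ring, pinky = curls
    # The index finger must be extended...
    index_extended = index <= straight_max
    # ...while the other three fingers are curled into the palm.
    others_curled = all(c >= curled_min for c in (middle, ring, pinky))
    # The thumb is ignored because its pose varies a lot between users.
    return index_extended and others_curled
```

Ignoring the thumb is a design choice made for this sketch only; a stricter detector could constrain it as well.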

For the ACTIVATE gesture, the system needs to detect when the player’s hand is drawing circles. The algorithm is inspired by the motion matching process described in TraceMatch [clarke2016tracematch]. The earliest data point is marked as the start of the circle, which is used to calculate the angle of the curve. Once the angle between the start of the circle and the latest data point has accumulated to over 360°, the hand has finished drawing a full circle, and the ACTIVATE gesture is fired.
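The idea of accumulating an angle along the hand's path can be sketched as below. Note that this is neither TraceMatch nor the project's implementation: as a simplifying assumption, it sums the signed change in heading between consecutive movement segments of a 2D path, which needs no estimate of the circle's centre. The function names and `sample_arc` helper are hypothetical.

```python
import math


def accumulated_turn_degrees(points):
    """Sum the signed heading changes (degrees) along a 2D path of (x, y) points."""
    total = 0.0
    for (x0, y0), (x1, y1), (x2, y2) in zip(points, points[1:], points[2:]):
        ax, ay = x1 - x0, y1 - y0  # previous movement segment
        bx, by = x2 - x1, y2 - y1  # current movement segment
        cross = ax * by - ay * bx
        dot = ax * bx + ay * by
        # Signed angle between the two segments; atan2(0, 0) is 0 in Python,
        # so repeated samples contribute nothing.
        total += math.degrees(math.atan2(cross, dot))
    return total


def is_circle_gesture(points, threshold_degrees=360.0):
    """True once the path has turned by more than the threshold, in either direction."""
    return abs(accumulated_turn_degrees(points)) > threshold_degrees


def sample_arc(sweep_degrees, steps=72, radius=0.2):
    """Hypothetical test helper: evenly spaced points along a circular arc."""
    return [
        (radius * math.cos(math.radians(sweep_degrees * i / steps)),
         radius * math.sin(math.radians(sweep_degrees * i / steps)))
        for i in range(steps + 1)
    ]
```

With this formulation, a half circle accumulates a little under 180° and does not fire, while a path that slightly overshoots a full turn does. Real tracking data is noisy, so the samples would likely need smoothing or down-sampling before heading changes are summed.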

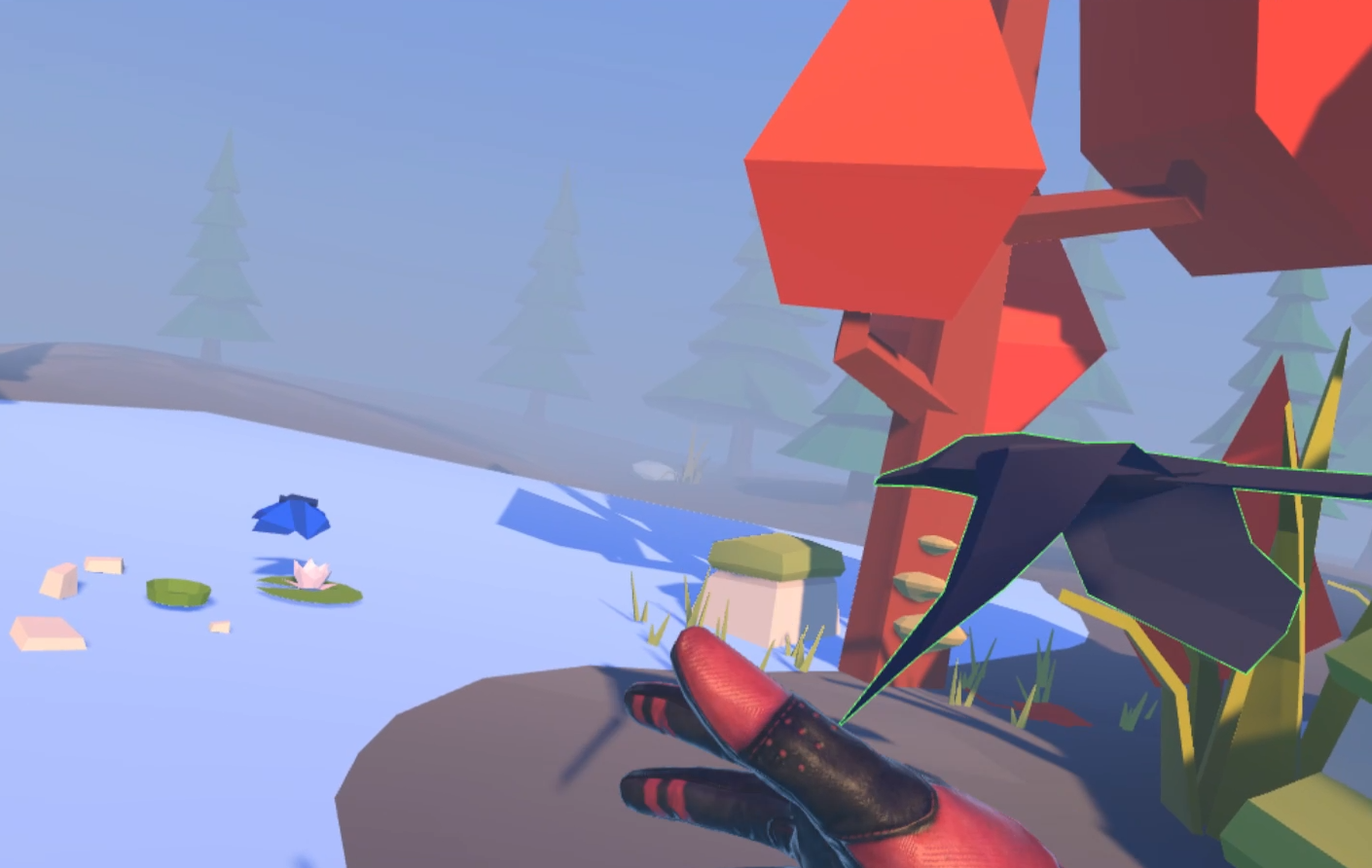

Final Prototype

As the design emerged and the technical gesture recognition fell into place, the VR world was developed and an animal companion was added into the project. This stage consisted of a gradual build up of a 3D world that would showcase the gesture-based interaction in a VR condition. It also required gradual adjustment of the gestures to the actual VR condition as it became functional.

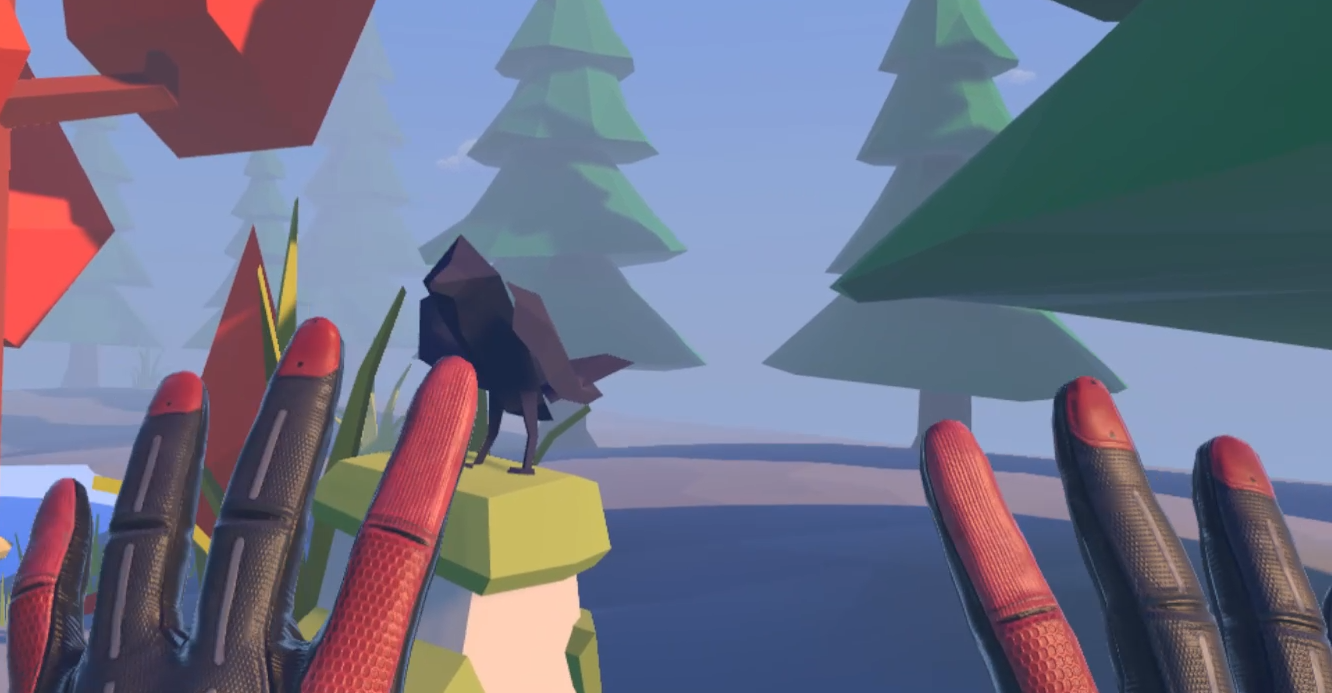

To better indicate that the ACTIVATE gesture is triggered and the companion is activated, the animation of the companion changes to a different state. In this case, the bird companion changes from a standing pose (left image) to a flying pose (right image), showing that it is ready for the next action. Additionally, the color of the outline changes to bright green for better visibility.

ACTION is a throwing gesture. It was first implemented using the TraceMatch algorithm. Once the hand is detected as moving in a circular motion, the angle of the curve is calculated. Unlike ACTIVATE, which requires a full circle, ACTION is detected when the angle of the curve has accumulated to over 60°.

However, extensive testing showed that this version of the ACTION gesture detection did not work well. The throwing motion did not match a circle most of the time, as it might be a short curve in the shape of an ellipse or a straight line. Therefore, in the final version the ACTION gesture was changed: the player closes the palm to indicate the start of the throwing gesture (mimicking holding the item to be thrown), then opens the palm to indicate the end of the gesture (the hand has released the item). The hand position is recorded at both points, and the direction of the ACTION gesture is then calculated from the vector between the two positions.
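The direction calculation for the final ACTION gesture can be sketched as a normalized vector from the position where the palm closed to the position where it opened. The function name and the `min_distance` guard are hypothetical additions for illustration, not details from the project.

```python
import math


def throw_direction(close_pos, open_pos, min_distance=0.05):
    """Return the unit direction of a throw, or None if the hand barely moved.

    close_pos / open_pos: (x, y, z) hand positions recorded when the palm
    closed and when it opened again.
    min_distance: hypothetical threshold (metres) that rejects accidental
    open/close events made without a throwing motion.
    """
    delta = tuple(o - c for c, o in zip(close_pos, open_pos))
    length = math.sqrt(sum(d * d for d in delta))
    if length < min_distance:
        return None
    # Normalize so that only the direction, not the throw length, is returned.
    return tuple(d / length for d in delta)
```

For example, closing the palm at `(0, 1, 0)` and opening it at `(0, 1, 0.5)` yields a direction of `(0.0, 0.0, 1.0)`, that is, straight along the z axis.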

The last gesture implemented is CANCEL, which is a “stop” hand gesture: both palms open with the fingertips pointing upwards. It is detected by checking the rotation of both hands to see whether each is within a threshold of the upward angle.
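One way to express the upward-angle check is to measure the angle between each hand's fingertip direction and the world up axis. The sketch below assumes that a fingertip direction vector is available for each hand and that y is up; the function names and the 30° threshold are hypothetical. The open-palm check is omitted here.

```python
import math


def angle_to_up_degrees(direction, up=(0.0, 1.0, 0.0)):
    """Angle in degrees between a direction vector and the up axis."""
    dot = sum(d * u for d, u in zip(direction, up))
    norm = math.sqrt(sum(d * d for d in direction)) * math.sqrt(sum(u * u for u in up))
    if norm == 0.0:
        return 180.0  # treat a degenerate vector as "not pointing up"
    # Clamp to guard against floating-point drift outside acos's domain.
    cos_angle = max(-1.0, min(1.0, dot / norm))
    return math.degrees(math.acos(cos_angle))


def is_cancel(left_dir, right_dir, max_angle=30.0):
    """True if both hands point upwards within max_angle degrees (hypothetical threshold)."""
    return (angle_to_up_degrees(left_dir) <= max_angle
            and angle_to_up_degrees(right_dir) <= max_angle)
```

Requiring both hands to pass the check makes it less likely that the gesture fires by accident during one-handed gestures such as pointing.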

Project

Video Demo

Documents

For detailed documentation, please refer to: Project Documentation and Defence Presentation. The initial idea of this project is presented in: Project Proposal.

Evaluation

No formal evaluation was possible due to the COVID-19 pandemic. However, the targeted design criteria would focus on compliance with Shneiderman’s principles for designing comprehensible, predictable, and controllable user interfaces.

User studies would need to evaluate the gesture interface presented in the prototype along those principles. The following tasks could be designed for players to complete, during which think-aloud data and video recordings of the physical and the digital performance (via screen capture) could be collected for qualitative studies:

- Use gestures to command the companion to fetch an item (no time constraint).

- As part of the gameplay, a fox has captured the player’s valuables. Before the fox runs away, the player needs to use gestures to direct the companion to attack the fox, then fetch the valuables back to the player (with a time constraint of 1 minute).

For a quantitative study, the results of the following questionnaires would be collected and analyzed for each user: NASA TLX (Task Load Index), CSI (Creativity Support Index), and UES (User Engagement Scale) for a VR game with a hand gesture interface.

Conclusion

The current prototype focused on interaction with a single animal companion. While the final prototype allows the user to select among different birds, activate one, and direct its action, it would be interesting to test more types of interactions. These could include:

- Interacting with multiple companions.

- Interacting with non-animal targets.

- Manipulating abstract targets (e.g. menu interfaces).

- Using different sets of gestures based on interaction with other animals (e.g. horses).

The underlying gesture recognition algorithm can be easily adapted to other types of sensor data. With small modifications, it should enable simpler mobile VR systems without full spatial tracking to offer richer gesture-based interactions.

Summary

Most VR games do not take advantage of embodiment in VR or use gesture interfaces to provide embodied interactions. Traditionally, gesture control has not been a viable means of control because of its lack of affordance, memorability, and discoverability. This paper explored an alternative gesture interface based on human-animal interaction. The VR platform has the advantage of displaying gesture prompts with real-time visual feedback, which makes it uniquely suitable for such gesture control. This paper demonstrated one such gesture interface in a proof-of-concept prototype.

This paper aimed to provide insights into the user experience design of VR and AR gesture interfaces, as well as to inspire future VR experiences to use more embodied interactions such as gesture controls.